Contribute code

Integrate changes into OSRD

This chapter is about the process of integrating changes into the common code base. If you need help at any stage, open an issue or message us.

The OSRD application is split into multiple services written in several languages. We try to follow general coding best practices and each language's specific conventions where required.

1 - General principles

Please read this first!

- Explain what you’re doing and why.

- Document new code with doc comments.

- Include clear, simple tests.

- Break work into digestible chunks.

- Take the time to pick good names.

- Avoid abbreviations that are not well known.

- Control and consistency over 3rd party code reuse: Only add a dependency if it is absolutely necessary.

  - Every dependency we add decreases our autonomy and consistency.

  - We try to keep PRs bumping dependencies to a low number each week in each component, so grouping dependency bumps in a batch PR is a valid option (see component’s README.md).

- Don’t reinvent every wheel: as a counter to the previous point, don’t reinvent everything at all costs.

  - If there is a dependency in the ecosystem that is the “de facto” standard, we should heavily consider using it.

- More general code recommendations are available in the main repository's CONTRIBUTING.md.

- Ask for any help that you need!

Consult back-end conventions ‣

Consult front-end conventions ‣

Continue towards write code ‣

Continue towards tests ‣

2 - Back-end conventions

Coding style guide and best practices for back-end

Python

Python code is used for some packages and integration testing.

Rust

- As a reference for our API development we are using the Rust API guidelines.

Generally, these should be followed.

- Prefer granular imports over glob imports like diesel::*.

- Tests are written with the built-in testing framework.

- Use the documentation example to know how to phrase and format your documentation.

- Use consistent comment style:

  - /// doc comments belong above #[derive(Trait)] invocations.

  - // comments should generally go above the line in question, rather than in-line.

  - Start comments with capital letters. End them with a period if they are sentence-like.

- Use comments to organize long and complex stretches of code that can’t sensibly be refactored into separate functions.

- Code is linted with clippy.

- Code is formatted with rustfmt (cargo fmt).

Java

3 - Front-end conventions

Coding style guide and best practices for front-end

We use ReactJS and all files must be written in TypeScript.

The code is linted with eslint, and formatted with prettier.

Nomenclature

The applications (osrd eex, osrd stdcm, infra editor, rolling-stock editor) offer views (project management, study management, etc.) linked to modules (project, study, etc.) which contain the components.

These views are made up of components and sub-components all derived from the modules.

In addition to containing the views files for the applications, they may also contain a scripts directory which offers scripts related to these views. The views determine the logic and access to the store.

Modules are collections of components attached to an object (a scenario, a rolling stock, a TrainSchedule). They contain:

- a components directory hosting all components

- an optional styles directory per module for styling components in scss

- an optional assets directory per module (which contains assets, e.g. default datasets, specific to the module)

- an optional reducers file per module

- an optional types file per module

- an optional consts file per module

An assets directory (containing images and other files).

Last but not least, a common directory offering:

- a utils directory for utility functions common to the entire project

- a types file for types common to the entire project

- a consts file for constants common to the entire project

Implementation principles

Naming

The following conventions are generally used in the code:

- components and types are in PascalCase

- variables are in camelCase

- translation keys are also in camelCase

- constants are in SCREAMING_SNAKE_CASE (except in special cases)

- CSS classes are in kebab-case
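
As an illustration, the conventions above could look like this in a single file. This is only a sketch: every name below (MAX_TRAIN_COUNT, TrainSummary, TrainLabel, the translation key and the CSS classes) is hypothetical and not taken from the OSRD code base, and the component returns a plain string instead of JSX so that the example stays self-contained.

```typescript
// Hypothetical names, for illustration only.

// Constants: SCREAMING_SNAKE_CASE.
const MAX_TRAIN_COUNT = 50;

// Types: PascalCase. Properties and variables: camelCase.
type TrainSummary = {
  trainName: string;
  isSelected: boolean;
};

// Translation keys: camelCase.
const translations: Record<string, string> = {
  addTrain: 'Add a train',
};

// Plain functions and variables: camelCase.
function canAddTrain(currentTrainCount: number): boolean {
  return currentTrainCount < MAX_TRAIN_COUNT;
}

// Components: PascalCase. A real component would return JSX.
function TrainLabel(train: TrainSummary): string {
  // CSS classes: kebab-case.
  const cssClass = train.isSelected ? 'train-label is-selected' : 'train-label';
  return `<span class="${cssClass}">${train.trainName}</span>`;
}
```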

Styles & SCSS

WARNING: in CSS/React, the scope of a class does not depend on where the file is imported, but is valid for the entire application. If you import an scss file in the depths of a component (which we strongly advise against), its classes will be available to the whole application and may therefore cause side effects.

It is therefore highly recommended that the SCSS code mirror the tree structure of applications, views, modules and components, and in particular that class names be nested to avoid side effects.

Some additional conventions:

- All sizes are expressed in px, except for fonts which are expressed in rem.

- We use the classnames library to conditionally apply classes: unconditional classes are passed as separate strings, while conditional classes are the keys of an object whose values are Boolean (or other) expressions. A key is used as a class name in CSS only when its value is truthy.
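
The behaviour described for classnames can be sketched as follows. To keep the example runnable on its own, classNamesSketch is a hypothetical, minimal stand-in that only handles string and object arguments; in the application, the real classnames library is imported instead. The class names and the isSelected / isDisabled flags are also assumptions made for the example.

```typescript
// Minimal stand-in for the `classnames` library, covering only the two
// argument shapes discussed above (strings and objects).
type ClassArgument = string | Record<string, unknown>;

function classNamesSketch(...args: ClassArgument[]): string {
  const classes: string[] = [];
  for (const arg of args) {
    if (typeof arg === 'string') {
      // Unconditional class: always kept (empty strings are ignored).
      if (arg) classes.push(arg);
    } else {
      // Conditional classes: a key is kept only when its value is truthy.
      for (const name of Object.keys(arg)) {
        if (arg[name]) classes.push(name);
      }
    }
  }
  return classes.join(' ');
}

// Hypothetical component state.
const isSelected = true;
const isDisabled = false;

const cardClassName = classNamesSketch('train-card', 'train-card-compact', {
  selected: isSelected,
  disabled: isDisabled,
});
```

Here cardClassName contains the two unconditional classes and selected, but not disabled.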

Store/Redux

The store allows you to store data that will be accessible anywhere in the application. It is divided into slices, which correspond to our applications.

However, we must be careful that our store does not become a catch-all.

Adding new properties to the store must be justified by the fact that the data in question is “high-level” and will need to be accessible from locations far from each other, and that a simple state or context variable is not appropriate for storing this information.

For more details about redux, please refer to the official documentation.

We use Redux Toolkit (RTK) to make our calls to the backend.

RTK allows you to make API calls and cache responses. For more details, please refer to the official documentation.

The backend endpoints and types are generated directly from the editoast OpenAPI specification and stored in generatedOsrdApi (using the npm run generate-types command). This generated file is then enriched with other endpoints or types in osrdEditoastApi, which can be used in the application. Note that it is possible to transform mutations into queries when generating generatedOsrdApi by modifying the openapi-editoast-config file.

When an endpoint call must be skipped because a variable is not defined, RTK’s skipToken is used to avoid having to use casts.

Translation

Application translation is performed on Weblate.

Imports

It is recommended that you use the full path for each import, unless your import file is in the same directory.

ESLint is set up to automatically sort imports into four groups, separated from each other by an empty line and each sorted in alphabetical order:

- React

- External libraries

- Internal absolute path files

- Internal relative path files

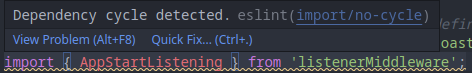

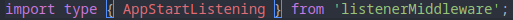

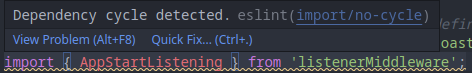

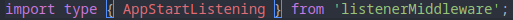

Regarding type imports/exports, ESLint and TypeScript are configured to automatically add the type keyword before a type import, which makes it possible to:

- Improve the performance and analysis process of the compiler and the linter.

- Make these declarations more readable; we can clearly see what we are importing.

- Make the final bundle lighter (all types disappear at compilation).

- Avoid dependency cycles (e.g. the error disappears with the type keyword).

4 - Write code

Integrate changes into OSRD

If you are not used to Git, follow this tutorial

Create a branch

If you intend to contribute regularly, you can request access to the main repository. Otherwise, create a fork.

Add changes to your branch

Before you start working, try to split your work into macroscopic steps.

At the end of each step, save your changes into a commit.

Try to make commits of logical and atomic units.

Try to follow style conventions.

Keep your branch up-to-date

git switch <your_branch>

git fetch

git rebase origin/dev

Continue towards commit style ‣

5 - Commit conventions

Some advice and rules about commit messages

Commit style

The overall format for git commits is as follows:

component1, component2: imperative description of the change

Detailed or technical description of the change and what motivates it,

if it is not entirely obvious from the title.

- the commit message, just like the code, must be in English (only ASCII characters for the title)

- there can be multiple components, separated by : in case of hierarchical relationships, with , otherwise

- components are lower-case, using -, _ or . if necessary

- the imperative description of the change begins with a lower-case verb

- the title must not contain any link (# is forbidden)

Ideally:

- the title should be self-explanatory: no need to read anything else to understand it

- the commit title is all lower-case

- the title is clear to a reader not familiar with the code

- the body of the commit contains a detailed description of the change

An automated check enforces this formatting as much as possible.
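
To make the title rules concrete, here is a minimal sketch of what a title check could look like. It is not the project's actual automated check: the function name checkCommitTitle, the regular expressions and the error messages are all assumptions derived from the rules listed above, and the sketch cannot tell whether the description really starts with an imperative verb, only that it starts with a lower-case letter.

```typescript
// Sketch of a commit title check. The patterns are assumptions derived from
// the rules above, not the project's actual automated check.

// A component: lower-case letters and digits, with -, _ or . if necessary.
const COMPONENT = '[a-z0-9][a-z0-9._-]*';

// One or more components separated by ":" or ",", then ": " and a description.
const TITLE_PATTERN = new RegExp(
  `^(${COMPONENT}(?:(?:: ?|, ?)${COMPONENT})*): (.+)$`
);

function checkCommitTitle(title: string): string[] {
  const problems: string[] = [];

  // Only printable ASCII characters are allowed in the title.
  if (!/^[\x20-\x7E]*$/.test(title)) {
    problems.push('title must only contain ASCII characters');
  }
  // "#" is forbidden, which rules out issue and PR links.
  if (title.indexOf('#') !== -1) {
    problems.push('title must not contain "#"');
  }

  const match = TITLE_PATTERN.exec(title);
  if (match === null) {
    problems.push('title must look like "component1, component2: description"');
    return problems;
  }

  // The description must begin with a lower-case letter.
  const description = match[2];
  if (!/^[a-z]/.test(description)) {
    problems.push('description must begin with a lower-case verb');
  }
  return problems;
}

// A valid title yields an empty list of problems.
const validTitleProblems = checkCommitTitle('editoast, front: add train import endpoint');
// This title is rejected because it contains "#".
const linkTitleProblems = checkCommitTitle('component: fix #42');
```

Returning a list of problems rather than a Boolean lets a hook or CI job report every issue with a title at once.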

Counter-examples of commit titles

To be avoided entirely:

- component: update ./some/file.ext: specify the update itself rather than the file; files are technical elements welcome in the body of the commit

- component: fix #42: specify the problem fixed in the title; links (to issues, etc.) are very welcome in the commit’s body

- wip: describe the work (and finish it)

Welcome to ease review, but do not merge:

- fixup! previous commit: an autosquash must be run before the merge

- Revert "previous commit of the same PR": both commits must be dropped before merging

The Developer Certificate of Origin (DCO)

All of OSRD’s projects use the DCO (Developer Certificate of Origin) to address

legal matters. The DCO helps confirm that you have the rights to the code you

contribute. For more on the history and purpose of the DCO, you can read The

Developer Certificate of Origin

by Roscoe A. Bartlett.

To comply with the DCO, all commits must include a Signed-off-by line.

How to sign a commit using git in a shell?

To sign off a commit, simply add the -s flag to your git commit command, like so:

git commit -s -m "Your commit message"

This also applies when using the git revert command.

How to sign a commit using git in Visual Studio Code (VS Code)?

Go to File -> Preferences -> Settings, then search for and activate the Always Sign Off setting.

From then on, when you commit your changes via the VS Code interface, your commits will automatically be signed off.

Continue towards sharing your changes ‣

6 - Share your changes

How to submit your code modifications for review?

The author of a pull request (PR) is responsible for its “life cycle”: contacting the various parties involved, following the review, responding to comments and correcting the code following review (see also the dedicated page about code review).

Open a pull request

Once your changes are ready, you have to request integration with the dev branch.

If possible:

- Make PRs logical and atomic units too (avoid mixing refactoring, new features and bug fixes in the same PR).

- Add a description to PRs to explain what they do and why.

- Help the reviewer by following the advice given in mtlynch's article.

- Add area:<affected_area> tags to show which parts of the application are impacted. This can be done through the web interface.

Take feedback into account

Once your PR is open, other contributors can review your changes:

- Any user can review your changes.

- Your code has to be approved by a contributor familiar with the code.

- All users are expected to take comments into account.

- Comments tend to be written in an open and direct manner. The intent is to efficiently collaborate towards a solution we all agree on.

- Once all discussions are resolved, a maintainer integrates the change.

It is best to avoid large PRs and to split them into multiple PRs. Doing so:

- eases the reviewing process and might accelerate it (it is easier to find an hour to review than half a day),

- is more agile: you get feedback on early iterations before proposing the next series of modifications,

- keeps the git history cleaner (in case of a git bisect looking for a regression, for example).

In the case where you cannot avoid a large PR, don’t hesitate to ask several reviewers to organize themselves, or even to carry out the review together, reviewers and author.

For large PRs that are bound to evolve over time, keeping corrections during review in separate commits helps reviewers. In the case of multiple reviews by the same person, this can save a full re-review (ask for help if necessary).

- If you believe somebody forgot to review / merge your change, please speak out, multiple times if need be.

Review cycle

A code review is an iterative process.

For a smooth review, it is imperative to correctly configure your GitHub notifications.

It is advisable to configure OSRD repositories as “Participating and @mentions”. This allows you to be notified of activities only on issues and PRs in which you participate.

Maintainers are automatically notified by the CODEOWNERS system. The author of a PR is responsible for advancing their PR through the review process and manually requesting maintainer feedback if necessary.

sequenceDiagram

actor A as PR author

actor R as Reviewer/Maintainer

A->>R: Asks for a review, notifying some people

R->>A: Answers yes or no

loop Loop between author and reviewer

R-->>A: Comments, asks for changes

A-->>R: Answers to comments or requested changes

A-->>R: Makes necessary changes in dedicated "fixups"

R-->>A: Reviews, tests changes, and comments again

R-->>A: Resolves requested changes/conversations if ok

end

A->>R: Rebase and apply fixups

R->>A: Checks commits history

R->>A: Approves the PR

Note right of A: Add to the merge queue

Finally continue towards tests ‣

7 - Tests

Recommendations for testing

Back-end

- Integration tests are written with pytest in the /tests folder.

- Each route described in the openapi.yaml files must have an integration test.

- The test must check both the format and content of valid and invalid responses.

Front-end

The functional writing of the tests is carried out with the Product Owners, and the developers choose a technical implementation that precisely meets the needs expressed and fits in with the recommendations presented here.

We use Playwright to write end-to-end tests, and vitest to write unit tests.

The browsers tested are currently Firefox and Chromium.

Basic principles

- Tests must be short (1 min max) and go straight to the point.

- Arbitrary timeouts are outlawed; a test must systematically wait for a specific event. It is possible to use the polling offered by Playwright's API (retrying an action, a click for example, after a certain time).

- All tests must be parallelizable.

- Tests must not point to or wait for text elements from the translation; prefer the DOM tree structure or add a specific id.

- We’re not testing the data, but the application and its functionality. Data-specific tests should be developed in parallel.

Data

The data tested must be public data.

The data required for the tests (infrastructure and rolling stock) is provided in the application’s JSON files, injected at the start of each test and deleted at the end, regardless of the test's result or how it is stopped, including with CTRL+C.

This is done by API calls in TypeScript before launching the actual test.

The data tested is the same, both locally and via continuous integration.

End-to-End (E2E) Test Development Process

E2E tests are implemented iteratively and delivered alongside feature developments. Note that:

- E2E tests should only be developed for the application’s critical user journeys.

- This workflow helps prevent immediate regressions after a feature release, enhances the entire team’s proficiency in E2E testing, and avoids excessively long PRs that would introduce entire E2E test suites at once.

- It is acceptable for E2E tests to be partial during development, even if their implementation increases ticket size and development time.

- Some parts of the tests will need to be mocked while the feature is still under development. However, by the end of development, the E2E test must be complete, and all mocked data should be removed. The final modifications to eliminate mocking should be minimal (typically limited to updating expected values).

- When adding a new feature, it is preferable to separate the implementation of the new feature and the tests into individual commits, to facilitate review.

- Test cases and user journeys should be defined in advance, during ticket refinement, before the PIP. They may be proposed by a QA or a Product Owner (PO) and must be validated by a QA, the relevant PO, and frontend developers.

- If an E2E test affects the E2E testing configuration, project architecture (e.g., snapshotting), or poses a risk of slowing down the CI, a refinement workshop must be organized to consult the team responsible for project architecture and CI, particularly the DevOps team.

Atomicity of a test

Each test must be atomic: it is self-sufficient and cannot be divided.

A test targets a single feature or component, provided it is not too large. An entire module or application is never covered by a single test; it necessarily requires a set of tests, in order to preserve test atomicity.

If a test needs elements to be created or added, these operations must be carried out by API calls in TypeScript upstream of the test, as is done for adding data. These elements must be deleted at the end of the test, regardless of the result or how it is stopped, including by CTRL+C.

This allows tests to be parallelized.

However, in certain cases where it is relevant, a test may contain several clearly explained and justified test subdivisions (several test() in a single describe()).

Example of a test

The requirement: “We want to test the addition of a train to a timetable”.

- add the test infrastructure and rolling stock to the database by API calls.

- create project, study and scenario with choice of test infrastructure by API calls.

- start the test, clicking on “add one or more trains” until the presence of the trains in the timetable is verified

- whether the test passes, fails or is stopped, the project, study and scenario are deleted, along with the test rolling stock and infrastructure, by API calls.

NB: the test will not test all the possibilities offered by the addition of trains; this should be a specific test which would test the response of the interface for all scenarios without adding trains.
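
The create / run / delete cycle of this example can be sketched with a try/finally block. This is a simplified illustration under several assumptions: fakeDatabase, createFixture, deleteFixture and runWithFixtures are hypothetical names, the in-memory array stands in for the back-end, and the calls are synchronous for brevity whereas real API calls would be asynchronous. It guarantees cleanup when the test body passes or throws; interruption with CTRL+C is not covered and would need separate handling.

```typescript
// In-memory stand-in for the back-end, so the sketch runs on its own.
// In a real test these would be asynchronous API calls.
let fakeDatabase: string[] = [];

function createFixture(name: string): void {
  fakeDatabase.push(name);
}

function deleteFixture(name: string): void {
  fakeDatabase = fakeDatabase.filter((existing) => existing !== name);
}

// Runs `testBody` with its fixtures in place and removes them afterwards,
// whether the body returns normally or throws.
function runWithFixtures(fixtures: string[], testBody: () => void): void {
  const created: string[] = [];
  try {
    for (const name of fixtures) {
      createFixture(name);
      created.push(name);
    }
    testBody();
  } finally {
    // Delete in reverse creation order (scenario before study, and so on).
    for (const name of created.reverse()) {
      deleteFixture(name);
    }
  }
}
```

A test for the timetable example would then pass the infrastructure, rolling stock, project, study and scenario as fixtures, and put the "add one or more trains" interaction in the test body.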

Continue towards write code ‣